Product Designer shaping trustworthy AI and complex digital systems

I design user-centred products where strategy, UX, and real-world constraints meet.

I turn ambiguity into product decisions. I connect user problems to business outcomes and design systems that reduce friction, improve clarity, and drive adoption.

Selected Work

Projects showcasing how I combine product thinking with design to solve real problems: navigating complex systems, building trust in AI, and creating experiences where technology amplifies human judgment.

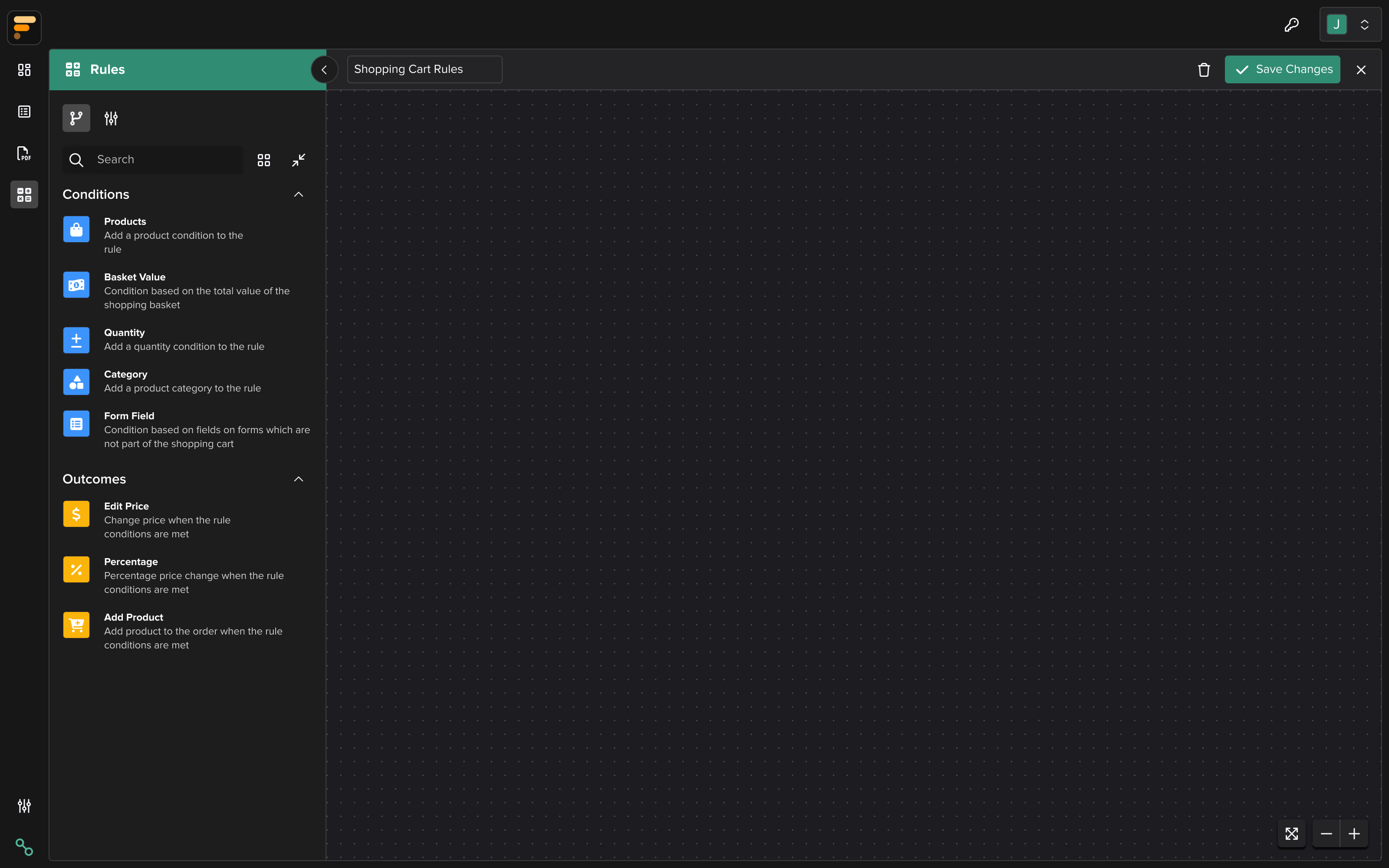

Information Architecture at Scale: Making Complex Dependencies Visible

Users couldn't see how API configurations depended on one another, so they created invalid mappings that failed silently weeks later. I restructured the information architecture to make those dependencies visible and help users build reliable configurations.

Explore Project

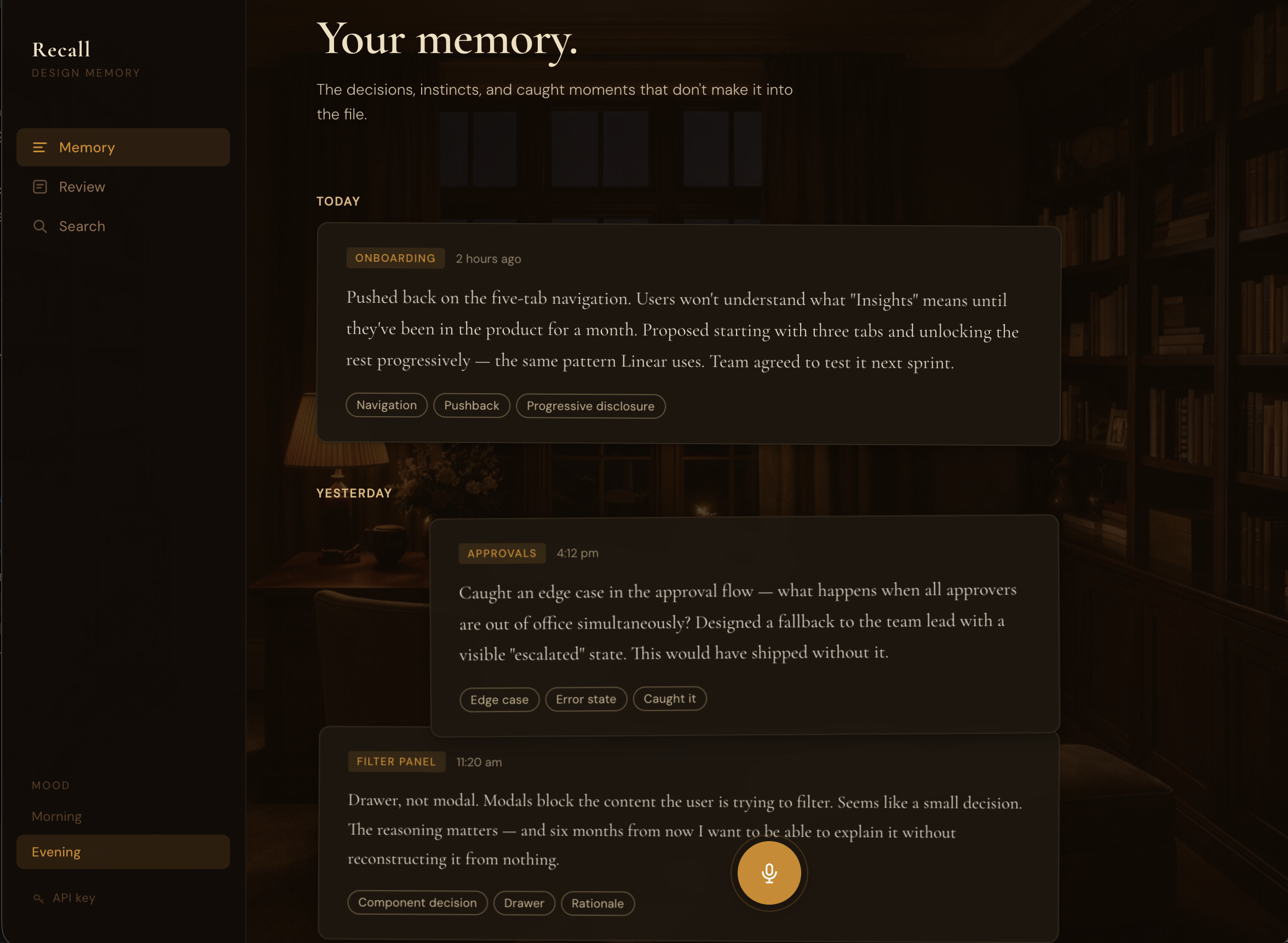

What If Capturing Design Decisions Took 30 Seconds?

I forget half my design decisions by month's end. So I built a voice-first app that turns speech into structured memory cards. Shipped in 3 days while working full-time.

Explore Project

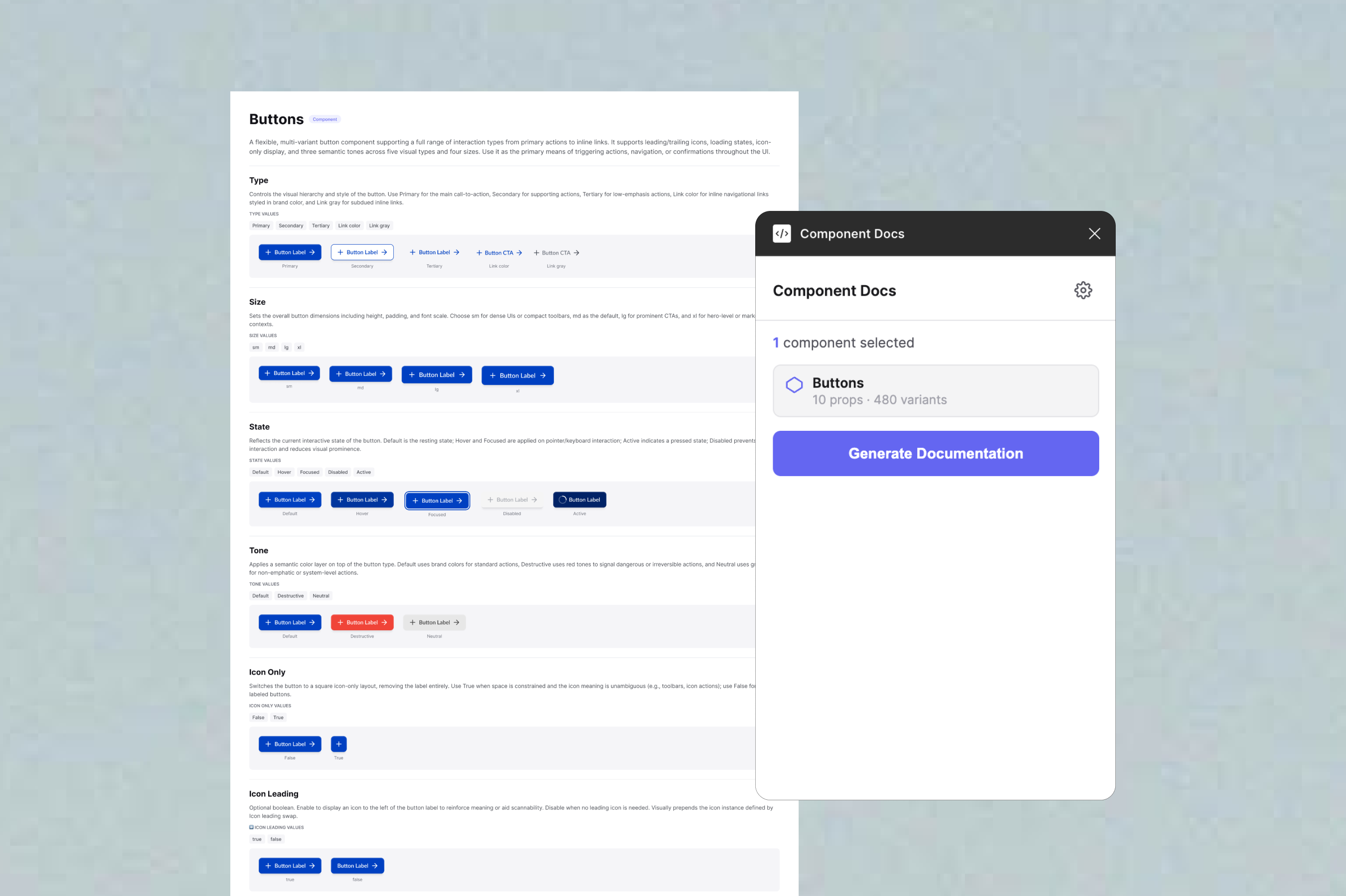

The Figma Plugin I Built Because No One Had Time to Write Docs

Our design system docs were always outdated because no one had time to maintain them. I built a Figma plugin that generates complete documentation in under a minute.

Explore Project

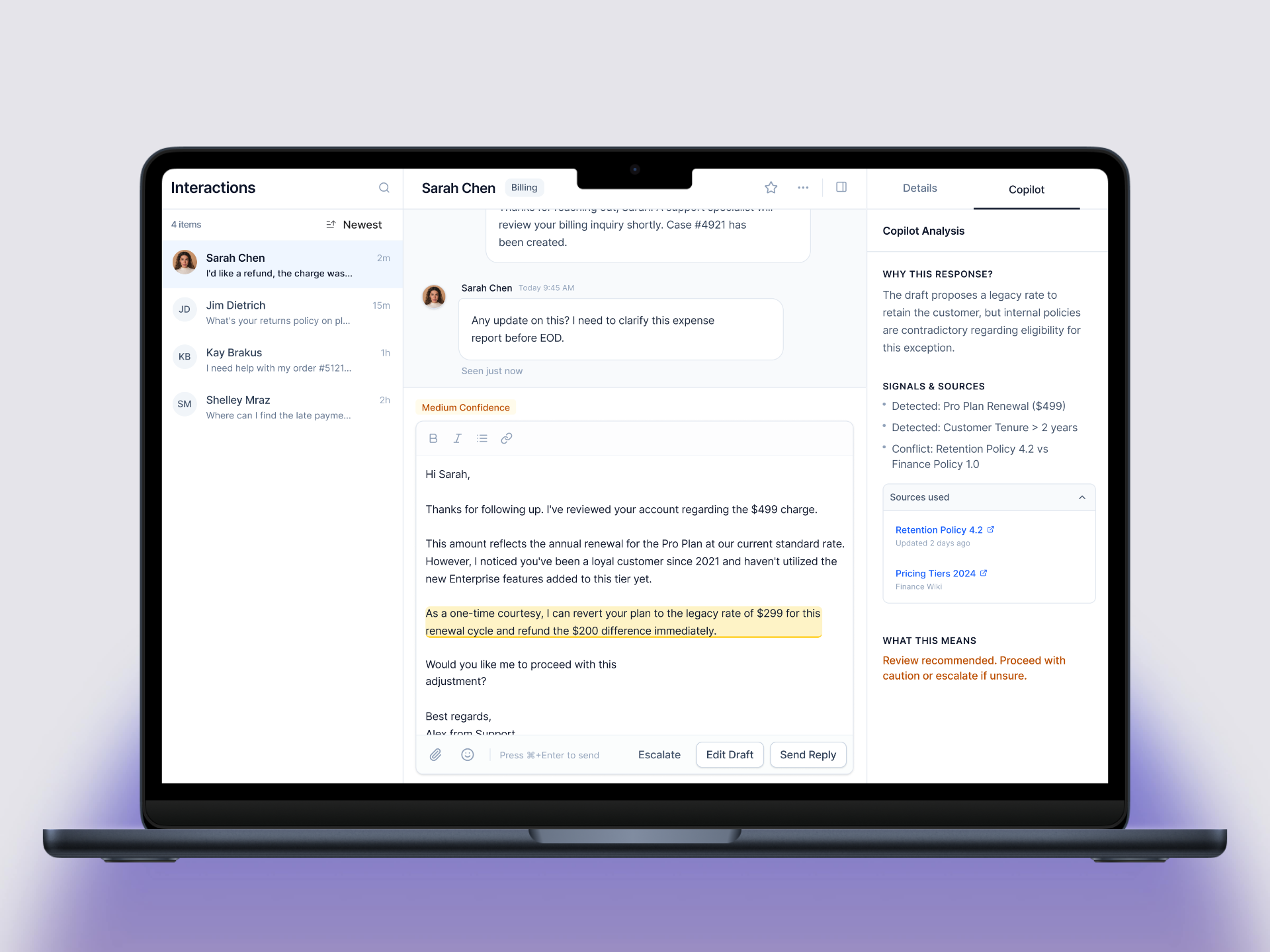

When Should AI Decide, and When Should It Ask for Help?

AI systems that never admit uncertainty cause more harm than ones that ask for help. I designed a decision escalation framework for customer support that gives agents the right information at the right moment, without overloading them.

Explore Project